Big data is a popular term used to describe the exponential growth and availability of data, both structured and unstructured.

– SAS Software

“Big data,” like most buzz terms, seems overwhelming and too big to employ successfully without some context and advice from experts. In fact, when I first heard the term, I had no clue what it actually meant. I assumed “big data” referred to collecting a lot of data within a big company with a major budget. After Googling the term, I found that my original assumption can be correct, but there are many definitions, explanations and suggested uses for big data. My curiosity and my need to validate my assumptions were big factors in my decision to attend the Measurement and Analysis track sessions at Intelligent Content Conference 2014.

As a contractor specializing in eLearning and technical communication, I often find that the scope of my projects lacks a measurement and evaluation component of any kind; when one is included, we rarely gather data that helps us shape communication for the audience or our paying clients.

In eLearning, projects often include measurement and evaluation methods based on instructional design models, with the evaluation phase tacked on at the end to attempt to measure an entire effort after the fact.

In tech comm, efforts to design based on user data and feedback are often crushed by senior management’s desire to just build and deliver more pretty stuff that fits nicely into their presentations.

To get out of the mindset of measuring the wrong things or nothing at all, it was fortuitous for me to spend time in the Measurement and Analysis track at ICC and learn how modern applications of data and measurement can improve user experiences and build better relationships. I uncovered three questions that everyone in tech comm and content creation should be asking about evaluating our work using data:

- Why are we gathering the data? A common concept delivered throughout ICC and other sessions, countless webinars, and years of research is that we gather data because we’re supposed to, but most often without meaning, reason or business value.

- Are we measuring effectively? We also tend to believe the numbers over human analysis because we assume the numbers are more accurate. Without analysis of the numbers and what they actually represent, we don’t understand the situations or draw meaningful conclusions to shape future decisions.

- How do we make decisions? In the Big Data: Metrics, Myths, and Power presentation at ICC, Jennifer Fell shared statistics from IBM’s Big Data Hub stating many people are unsure just how much of their data is inaccurate and one in three leaders doesn’t trust the data being used to make decisions.

That’s scary to contemplate…and I thought it was just me who wasn’t sure of the validity of the stuff I sent out!

Modern Applications of Data and Metrics to eLearning and Technical Communication

As communicators and content developers, we need to move past the typical measurement of how many people complete a course or how many readers click through an online document if we want to build better learning and communication products for our audiences and clients.

Where there are problems, there are opportunities. I got to thinking about my own project work in eLearning, technical writing and copywriting and wondered how I could make a better business case for using data to create better results in our projects.

Ask What, Why and Whether. Before designing and developing any communication or learning effort, we really should determine if it is even necessary, before we spend valuable resources figuring out the best way to deploy. Just because we have the shiny tools to animate .gifs and make everything clickable/interactive does not mean we should. That means asking tougher, more fundamental questions at the outset. If we want actions to change, such as how someone enters information into a software application or how they describe a product to customers, is a flat PDF communication really instructing them? We should determine, through methods such as providing and evaluating surveys and reviewing the number of help desk calls (and outcomes) around a particular issue, what the actor (your customer or end user) already knows and does, then match communication and training to change actions and improve behaviors.

To build a complete and useful set of eLearning courses, we should gather data on our learners, including their backgrounds, expectations, communication preferences and motivations. We can then shape our learning efforts to meet audience and client needs. It’s an approach that’s orders of magnitude better than pushing out yet another multiple choice exam to learners or inviting managers to complete one more high-level survey.

Start Small. We need to pick something to measure as a benchmark and make the business case to project sponsors, bosses, whomever that it is better for the clients/customers if we measure that thing solidly rather than either the wrong thing or nothing at all. As Shawn Prenzlow shared at ICC, when we get a good feel for this first item, we can add more items to track and measure.

Ideas for applying this to communication and learning environments could be as simple as grabbing one procedure after deploying it in a new format and measuring it against the number of help desk tickets received. But we know we have more to do than just trust the numbers. An increase in help desk requests could indicate that the procedure was confusing, but maybe the new format was easier to navigate, so more people paid attention to it to begin with.
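For anyone who wants to try this with a spreadsheet export, here is a minimal Python sketch of that comparison. It is only an illustration: the function name `tickets_per_100_views` and all of the numbers are hypothetical, and it assumes you can get both a ticket count and a page-view count for the same procedure over the same period.

```python
def tickets_per_100_views(tickets, views):
    """Return help desk tickets per 100 views of a procedure."""
    if views == 0:
        raise ValueError("no views recorded; the rate is undefined")
    return 100 * tickets / views


# Hypothetical figures for one procedure, before and after the new format.
old_format = {"tickets": 52, "views": 400}
new_format = {"tickets": 60, "views": 800}

old_rate = tickets_per_100_views(old_format["tickets"], old_format["views"])
new_rate = tickets_per_100_views(new_format["tickets"], new_format["views"])

# Raw ticket counts and view-adjusted rates can point in opposite directions.
print(f"Raw tickets: {old_format['tickets']} -> {new_format['tickets']}")
print(f"Tickets per 100 views: {old_rate:.1f} -> {new_rate:.1f}")
```

In this made-up case the raw ticket count goes up while the rate per 100 views goes down, which is exactly the kind of situation where the number alone would mislead us and a little analysis changes the conclusion.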

Jennifer Fell on Big Data at Intelligent Content Conference 2014

Leverage Rewards & Incentives. Jennifer Fell shared an example of how to take data and apply it to a group of people sitting in a conference session. If we knew who was attending a particular session, we could push a Starbucks coupon to them right before a break. (I secretly hoped this was going to happen before the session ended.)

We could also apply this to a learning environment. After completing a course, instead of just receiving a printable certificate, we could include a coupon, a link to a printable gift card or even a link to download a free trial version of some software. This could even build relationships with local vendors or software developers and lead to additional incentives on both sides.

Performance Improvement. Rather than measuring click-through rates or multiple choice exam results, we should measure the improvements to performance after someone has read through a communication piece or completed a course. We can grab actual statistics to see what behaviors and actions have changed, survey the audience to learn how changes could be understood and received more effectively and then modify our approach to improve performance and reception.

Personalization. We can use data to create a personalized user experience within social media and websites. It goes beyond a simple welcome message and into personalized recommendations for places to travel or new coffee to purchase. In learning environments, this personalization could show progression through a course and highlight particular areas of interest or concern by user.

Feedback Loop & Acceptance Rate. We can use the data we collect directly from our audience to improve our communication style and approach for delivering content. If a cleverly designed Word document is never opened, yet contains essential information for job performance, we need to know that it is not working and attempt to learn how to make the information work for the audience.

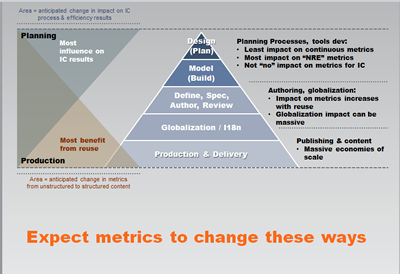

Shawn Prenzlow covered how metrics change for intelligent content at ICC2014

Continuous Improvement. The data we measure needs to be audited to make sure it is still relevant. For example, if we measure attendance at an event during a specific date and time, we can use the information to shape the next event, but the exact measurement itself needs to be updated.

Plan for Governance. Communicators need to establish a set of processes and people to measure efforts and ensure all parties are gathering and analyzing in the same way. In the projects I work on, the first step to creating any sort of governance structure is to get the right people from multiple departments working together. Right now, we have duplicate and inefficient efforts to measure unimportant items like the click-through rates on our supplementary (non-essential) communication pieces. An effective governance structure could eliminate these trivial metrics in favor of those that provide real value.

Understand Privacy Implications. With rewards, incentives and personalization comes the need to handle privacy appropriately. Questions surround how learners would respond to their information being tracked and pushed back to them. How would they respond to this in a traditional classroom environment vs. eLearning vs. a customer service experience?

For example, I would be fine with the coupon being sent to me based on my conference session attendance. If I had already indicated online through a site such as Lanyrd that I was going to attend particular sessions, I know I have allowed that information to be visible online in a public space. However, if I was taking a continuing education course or something off-topic from my day job, I might not want that information used or shared.

By applying some of these simple approaches, we can better understand what makes our audience successful and how to create a unique and meaningful experience. As I review the ICC slide decks, attend webinars, and read white papers, I see the trend moving toward the ways in which modern technology helps us personalize user experiences as well as the sheer volume of data new technologies can track and manage. Companies and communicators who can master some current trends while anticipating what will come next should be able to gain competitive advantage while also improving the user experience.

I am curious to know how other instructional designers, technical communicators and others are applying data, results and personalized offers in unique ways to clients, learners and end users. Please post a comment or drop us a note here at TechWhirl.

Sources

Intelligent Content Conference 2014 Presentations

- How Workflow Metrics Change for Intelligent Content – Shawn Prenzlow, The Reluctant Strategist

- Creating Metadata Strategies: Structuring Content for Success – Rebecca Schneider, Azzard Consulting

- Big Data: Metrics, Myths, and Power – Jennifer Fell, Expert Support, Inc.

What is Big Data – SAS Software

3 Simple Ways to Measure the Success of Your E-Learning – Tom Kuhlmann, Articulāte